This blog post seeks to challenge that notion by exploring the foundational Principles of Deep Learning. The insights presented here are heavily inspired by the comprehensive scholarly survey, "A Survey on Statistical Theory of Deep Learning: Approximation, Training Dynamics, and Generative Models," authored by Namjoon Suh and Guang Cheng and published in the Annual Review of Statistics and Its Application.

1. Introduction

“… The history of deep learning is a story of progress in three fundamental principles: the existence of a neural network that can solve the problem, the design of diverse architectures, and the training dynamics to find the solution. …”

2. The Existence of a Neural Network: Can it solve the problem?

Coming soon ...

2.1 Fully Connected Neural Networks

Coming soon ...

2.2 The Space Triplet

Coming soon ...

2.3 Fixed Approximation Error

Coming soon ...

2.4 The Universal Approximation Theorem

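The Universal Approximation Theorem states that a feedforward neural network with a single hidden layer and a finite number of neurons can approximate any continuous function on a compact subset of $\mathbb{R}^n$ to arbitrary accuracy, given an appropriate (for example, non-polynomial) activation function. The theorem guarantees only that such a network exists; it does not say how many neurons are needed or how to find the weights. (GeeksforGeeks)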
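To make the statement concrete, here is a minimal sketch of one constructive special case: a single-hidden-layer ReLU network on an interval of the real line whose weights are set by hand, not trained, so that it linearly interpolates the target function. The function names (`build_relu_interpolant`, `network`, `max_error`) and the choice of `sin` on $[0, \pi]$ are illustrative assumptions, not something taken from the survey.

```python
import math

def relu(z):
    return max(0.0, z)

def build_relu_interpolant(f, a, b, n_hidden):
    """Hand-pick weights of a one-hidden-layer ReLU network that linearly
    interpolates f at n_hidden + 1 equally spaced knots on [a, b]."""
    h = (b - a) / n_hidden
    knots = [a + i * h for i in range(n_hidden + 1)]
    values = [f(x) for x in knots]
    slopes = [(values[i + 1] - values[i]) / h for i in range(n_hidden)]
    # Each hidden neuron relu(x - knot_i) changes the slope by coeffs[i].
    coeffs = [slopes[0]] + [slopes[i] - slopes[i - 1] for i in range(1, n_hidden)]
    return values[0], knots[:n_hidden], coeffs

def network(x, bias, knots, coeffs):
    """Output = bias + sum_i coeffs[i] * relu(x - knots[i])."""
    return bias + sum(c * relu(x - k) for k, c in zip(knots, coeffs))

def max_error(f, a, b, n_hidden, n_grid=2000):
    """Largest absolute gap between f and the network on a dense grid."""
    params = build_relu_interpolant(f, a, b, n_hidden)
    grid = [a + (b - a) * j / n_grid for j in range(n_grid + 1)]
    return max(abs(f(x) - network(x, *params)) for x in grid)

# The error shrinks as hidden neurons are added.
for n in (4, 16, 64):
    print(n, max_error(math.sin, 0.0, math.pi, n))
```

The design choice here is deliberate: fixing the weights analytically isolates the existence question (can some network do it?) from the training question (can gradient descent find it?), which the post treats separately.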

2.5 The Advantage of Depth

Coming soon ...

2.6 The Limits of Width

Coming soon ...

2.7 Breaking the Curse of Dimensionality

Coming soon ...

3. Diverse Architectures: Beyond Simple Connections

“… Neural networks, the mathematical abstractions of our brain, lie at the core of this progression. Nevertheless, amid the ongoing renaissance of artificial intelligence (AI), neural networks have acquired an almost mythical status, spreading the misconception that they are more art than science. …”

3.1 Convolutional Neural Networks

Coming soon ...

3.2 Recurrent Neural Networks

Coming soon ...

3.3 Recurrent Convolutional Neural Networks

Coming soon ...

3.4 Graph Neural Networks

Coming soon ...

3.5 Transformer Architectures

Coming soon ...

3.6 Hybrid Transformer Architectures

Coming soon ...

3.7 Large Language Models

Coming soon ...

4. Training Dynamics: How do we find the solution?

Coming soon ...

4.1 Loss Function

Coming soon ...

4.2 Gradient Descent

Coming soon ...

4.3 Training Regimes

Coming soon ...

4.4 Half-Precision Training

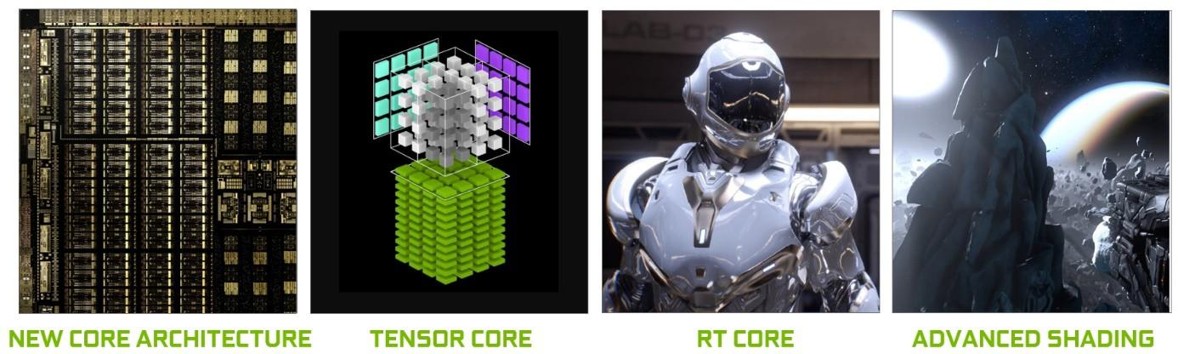

Tensor Cores

Tensor Cores enable mixed-precision computing, dynamically adapting calculations to accelerate throughput while preserving accuracy and providing enhanced security. The latest generation of Tensor Cores are faster than ever on a broad array of AI and high-performance computing (HPC) tasks. From 4X speedups in training trillion-parameter generative AI models to a 30X increase in inference performance, NVIDIA Tensor Cores accelerate all workloads for modern AI factories. (NVIDIA)
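The phrase "preserving accuracy" hides two numerical pitfalls of half precision that mixed-precision training is commonly set up to avoid. The sketch below emulates IEEE 754 half-precision rounding with Python's `struct` module; it does not use or model Tensor Core hardware. The loss-scale value of 1024 and the variable names are assumptions chosen for illustration, and Python's 64-bit float stands in for the higher-precision copy of the weights.

```python
import struct

def to_fp16(x):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

# Pitfall 1: small gradients underflow to zero in half precision.
tiny_grad = 1e-8
loss_scale = 1024.0  # hypothetical scale factor, chosen for illustration

naive = to_fp16(tiny_grad)                # stored directly in fp16
scaled = to_fp16(tiny_grad * loss_scale)  # scaled up before the fp16 cast
recovered = scaled / loss_scale           # unscaled in higher precision

# Pitfall 2: small updates vanish when added to a weight held in fp16.
weight, update = 1.0, 1e-4
fp16_weight = to_fp16(to_fp16(weight) + to_fp16(update))
master_weight = weight + update  # higher-precision copy keeps the update

print("naive fp16 gradient:", naive)
print("recovered gradient: ", recovered)
print("fp16 weight after update:  ", fp16_weight)
print("master weight after update:", master_weight)
```

Scaling the loss before the backward pass and keeping a higher-precision copy of the weights are two widely used remedies; frameworks typically automate both.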

4.5 SOTA Scaling Techniques

Accumulate Gradients

Gradient Clipping

Stochastic Weight Averaging (SWA)

Batch Size Finder

Learning Rate Finder
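The first two techniques named above can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not a description of any particular library: the model is a one-dimensional linear regression, the helper names (`mse_grad`, `accumulate_gradients`, `clip_by_global_norm`) are hypothetical, and all micro-batches are assumed to have the same size so that averaging their gradients matches the full-batch gradient.

```python
import math

def mse_grad(w, b, batch):
    """Gradient of mean squared error for y ~ w * x + b over (x, y) pairs."""
    n = len(batch)
    gw = sum(2 * (w * x + b - y) * x for x, y in batch) / n
    gb = sum(2 * (w * x + b - y) for x, y in batch) / n
    return [gw, gb]

def accumulate_gradients(w, b, micro_batches):
    """Average gradients over equally sized micro-batches, emulating one
    large batch without holding it in memory at once."""
    total = [0.0, 0.0]
    k = len(micro_batches)
    for mb in micro_batches:
        g = mse_grad(w, b, mb)
        total = [t + gi / k for t, gi in zip(total, g)]
    return total

def clip_by_global_norm(grads, max_norm):
    """Rescale grads so their Euclidean norm does not exceed max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    return [g * (max_norm / norm) for g in grads]

# Toy data from y = 3x + 1, split into four micro-batches of two samples.
data = [(float(i), 3.0 * i + 1.0) for i in range(8)]
micro_batches = [data[i:i + 2] for i in range(0, len(data), 2)]

accumulated = accumulate_gradients(0.5, 0.0, micro_batches)
clipped = clip_by_global_norm(accumulated, max_norm=1.0)
print("accumulated:", accumulated)
print("clipped:    ", clipped)
```

Accumulation trades extra forward and backward passes for a lower peak memory footprint, while clipping bounds the size of a single update step without changing its direction.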

5. Conclusion

Coming soon ...